KINECT/EQ INTERFACE

The effects of Microsoft Kinect-based Interface on Live Guitar Performance (Master's Project)

Final paper (mirror) | User manual (mirror)

OVERVIEW

For my Master's project, I wrote an app that lets you change equalizer levels via the Microsoft Kinect's skeleton tracker. Unlike the more conventional binary on/off controls found on foot pedals, the app offers a novel way to control guitar effects continuously using gestures, all while playing a live guitar.

Kinect/EQ Interface hardware setup

After gathering feedback from early versions of the app through interviews and rapid iterative prototyping, I conducted a study of the final version to measure the app's perceived usefulness.

IMPLEMENTATION

The final application, which I dubbed the "Kinect/EQ Interface", is a Windows app that enables you to change equalizer levels in real time with acceptable accuracy while playing your acoustic guitar. I wrote the app using C# with the Kinect SDK, Max for Ableton Live patches, and Open Sound Control.

Head tracking movement and corresponding direction of real-time knob level adjustment
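One way to turn head movement into a continuous knob level is to nudge the level in the direction the head has moved away from a neutral position. The sketch below illustrates that idea only. The dead zone, the rate constant, and the use of a lateral offset in meters are all assumptions for illustration, and the app's actual mapping may differ.

```python
def nudge_level(level: float, head_offset_m: float, dt_s: float,
                dead_zone_m: float = 0.05, rate_per_m_s: float = 2.0) -> float:
    """Return the knob level (0.0 to 1.0) after one tracking frame.

    head_offset_m: signed head displacement from a neutral position
                   (hypothetical input, e.g. derived from a tracked head joint).
    dt_s:          time elapsed since the previous frame, in seconds.
    """
    # Ignore small movements so ordinary playing motion does not move the knob.
    if abs(head_offset_m) <= dead_zone_m:
        return level

    # Signed distance beyond the dead zone decides direction and speed.
    if head_offset_m > 0:
        excess = head_offset_m - dead_zone_m
    else:
        excess = head_offset_m + dead_zone_m

    new_level = level + rate_per_m_s * excess * dt_s

    # Clamp to the knob's valid range.
    return min(1.0, max(0.0, new_level))
```

The dead zone is the main design choice here: without it, the small head movements that come with normal playing would cause the level to drift.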

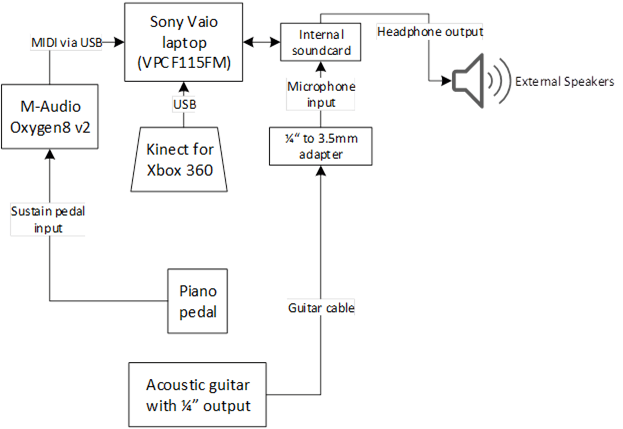

The hardware implementation of the Kinect/EQ Interface consists of the following parts and signal flow:

Hardware signal flow

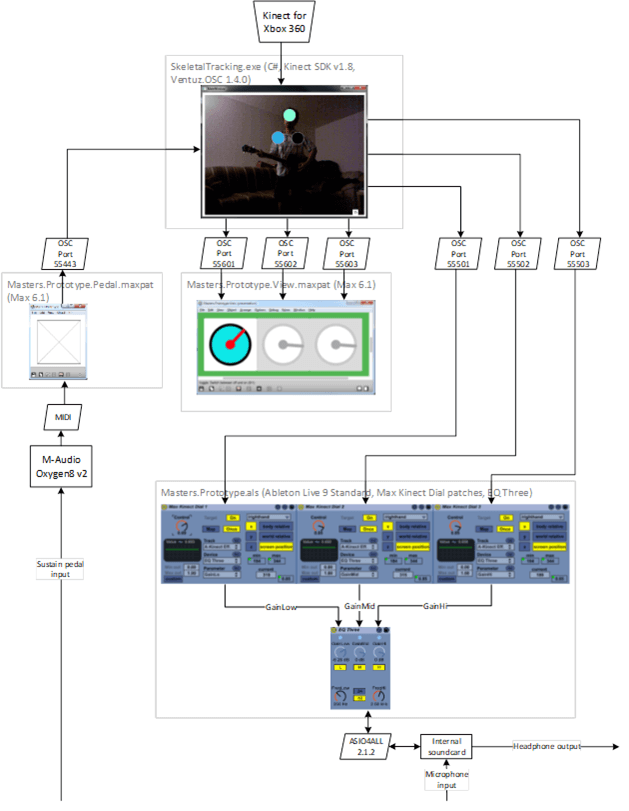

The software implementation of the Kinect/EQ Interface consists of the following parts and architecture:

Software architecture

STUDY

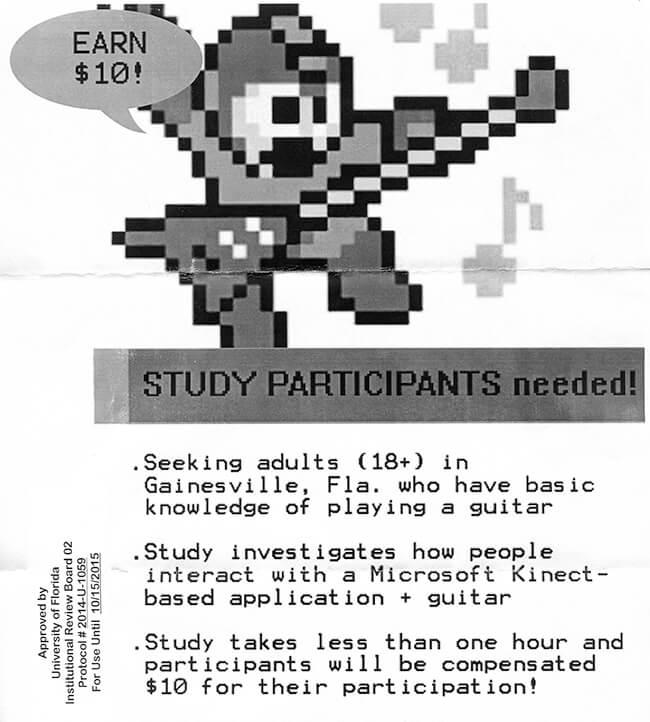

To measure the perceived usefulness of the Kinect/EQ Interface, I conducted an IRB-approved study at the University of Florida School of Music.

Flyer for the study

29 participants went through the same procedure.

RESULTS

The results of the study were as follows:

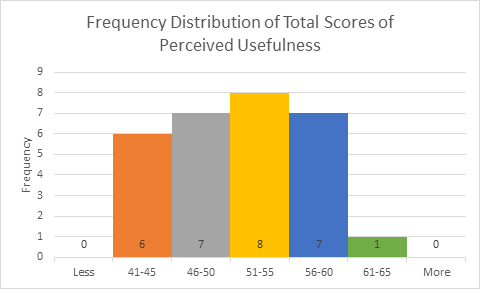

Histogram of perceived usefulness total scores

Although the mean score was greater than 50, the result was not statistically significant (p = 0.35). Hence, it cannot be concluded that users in general will perceive the Kinect/EQ Interface to be useful during live performance.
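For readers unfamiliar with this kind of comparison, the sketch below shows how a test statistic for "is the mean greater than 50?" can be computed. It assumes a one-sample t-test against a reference value of 50, which is an assumption on my part about the general approach. The scores in the usage note are made up for illustration and are not the study data.

```python
import math
import statistics


def one_sample_t(scores: list[float], reference: float = 50.0) -> tuple[float, int]:
    """Return (t statistic, degrees of freedom) for H0: mean == reference."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two scores")
    mean = statistics.mean(scores)
    # Sample standard deviation (n - 1 in the denominator).
    sd = statistics.stdev(scores)
    standard_error = sd / math.sqrt(n)
    return (mean - reference) / standard_error, n - 1
```

For example, the made-up scores `[40, 50, 60, 70]` have a mean of 55 yet give a t statistic of only about 0.77 on 3 degrees of freedom, because the spread is large relative to the sample size. The p-value then comes from the t distribution with those degrees of freedom. If SciPy is available, `scipy.stats.ttest_1samp(scores, popmean=50, alternative="greater")` returns both values.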

SUMMARY

I started this Master's project with the observation that continuous controls are hard to reach during performance because of their existing form factors, and I developed the Kinect/EQ Interface app as a novel way to address that. I then ran a study to measure the app's perceived usefulness during live guitar performance.

This project took me several semesters to complete, so there are a lot of details I left out. For more information about the project's motivation, research, interviews, prototypes, and implementation specifics, as well as the study procedure, discussion, and future work, you can download the final paper (mirror).